The best coding prompts do not come from a single template. They are the prompts that remove ambiguity, define scope, and tell the model exactly what “done” means. That is why two developers can use the same tool and get wildly different results: the difference is in the prompt, not the tool.

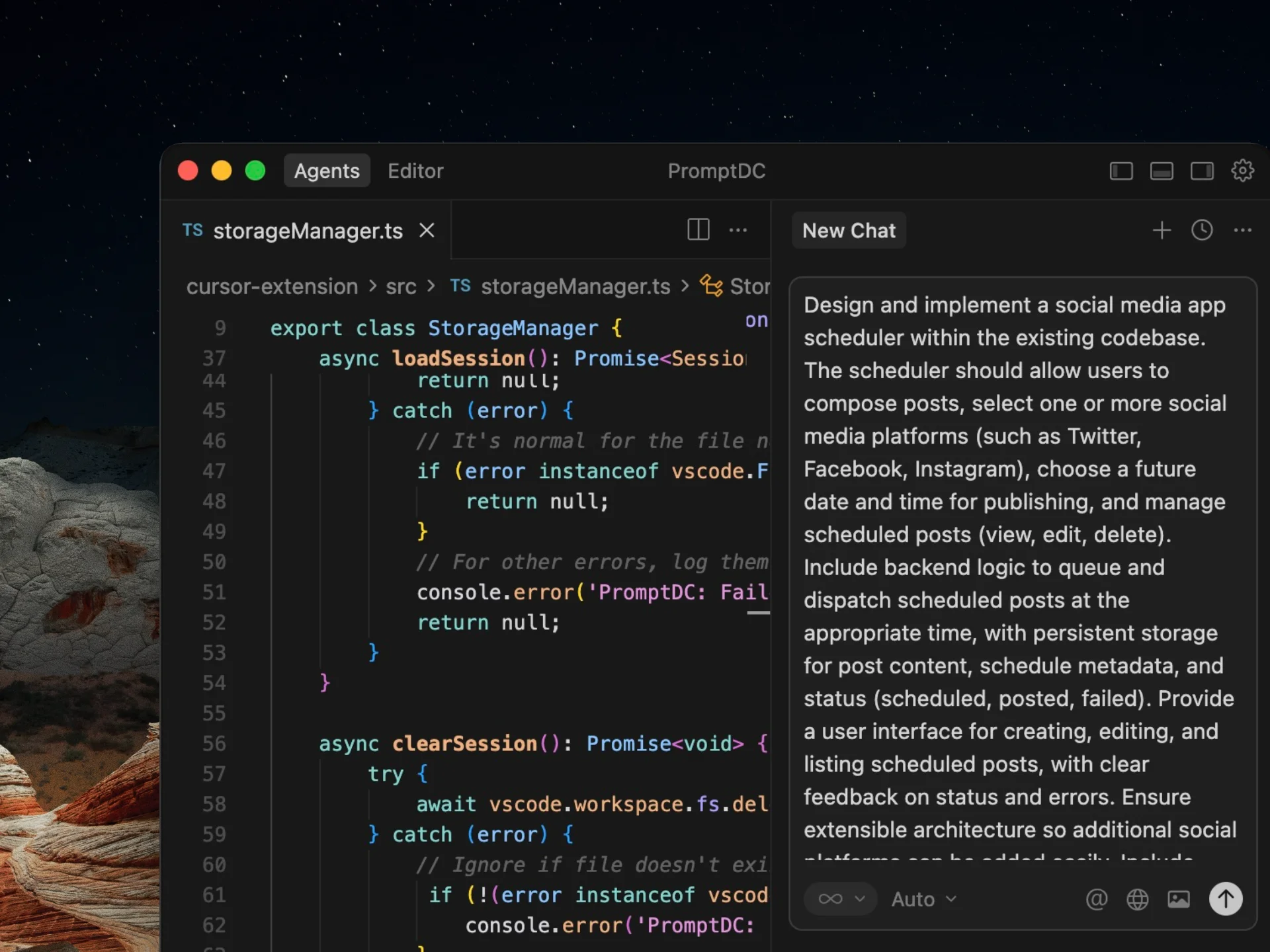

PromptDC is a coding-first prompt rewriter that turns rough ideas into structured, implementation-ready prompts. You keep your intent, but the prompt gains clarity, constraints, and output format.

Answer in 2 sentences

PromptDC is a coding-first prompt rewriter that transforms vague developer prompts into precise, implementation-ready instructions for AI code generation, written to be model-agnostic.

The best coding prompts read like developer specs: clear goal, context, constraints, and acceptance criteria.

Key takeaways

- High-quality prompts are structured, not long.

- Constraints and output format are more important than clever wording.

- PromptDC automates prompt quality so results stay consistent.

The prompt rubric that defines “best”

- Clarity: one goal, stated in plain language.

- Context: stack, dependencies, and existing code.

- Constraints: must-haves, must-not-haves, and limits.

- Output format: files, steps, or code blocks.

- Validation: tests, edge cases, and errors.

Best coding prompts by category

Feature build

Build a [feature] in [stack]. Include required UI states, API routes, and data validation. Return file structure and code blocks for each file.

Debugging

Investigate the error in [file]. Provide root cause, minimal fix, and a regression test. Do not change unrelated code.

Refactor

Refactor [component] for readability and performance. Preserve behavior, add comments only where needed, and include before/after diff notes.

Testing

Write unit tests for [module]. Include positive and negative cases, mock external APIs, and report coverage.
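The category prompts above use bracketed slots such as [feature], [stack], and [file]. As a minimal sketch of how a team might reuse them, the helper below fills each slot and refuses to produce a prompt while any slot is still empty. The function name `fill_prompt` and the file path in the usage are hypothetical, chosen only for illustration; this is not part of PromptDC.

```python
import re

# Matches one bracketed slot such as [file] or [stack].
PLACEHOLDER = re.compile(r"\[([^\[\]]+)\]")


def fill_prompt(template: str, values: dict[str, str]) -> str:
    """Replace every [name] slot; raise if any slot has no value."""
    missing = sorted({name for name in PLACEHOLDER.findall(template) if name not in values})
    if missing:
        raise KeyError(f"missing values for: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda match: values[match.group(1)], template)


# The debugging prompt from this article, kept as a reusable template.
DEBUGGING = (
    "Investigate the error in [file]. Provide root cause, minimal fix, "
    "and a regression test. Do not change unrelated code."
)

# Hypothetical file path, used only to show the substitution.
prompt = fill_prompt(DEBUGGING, {"file": "src/api/orders.ts"})
print(prompt)
```

Failing on a missing slot is a deliberate choice here: a prompt that still contains a literal [stack] is exactly the kind of ambiguity the rubric is meant to remove.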

Before and after examples

Before

Make the UI faster.

After (PromptDC rewritten)

Improve UI performance by reducing re-renders in the dashboard list. Memoize row components, add pagination, and lazy-load charts below the fold. Include benchmark results and before/after notes.

Before

Add search.

After (PromptDC rewritten)

Add a search input that filters the customer table by name, email, and company. Include debounce, empty state, and highlight matched text. Return updated component and tests.

Common mistakes

- Starting with a tool list instead of defining the problem.

- Leaving out constraints and edge cases.

- Requesting code without specifying outputs or file structure.

How to score a coding prompt

- Is the goal specific and measurable?

- Is the context sufficient for the stack?

- Are constraints and acceptance criteria explicit?

- Is the output format defined?

- Are tests or validations included?

A prompt that scores well on all five points is usually strong enough for production-quality code output.
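The five questions can be recorded as a simple checklist. The sketch below assumes a human reviewer answers each question with yes or no; the class name `PromptReview` and its field names are hypothetical, and the code only counts answers, it does not judge the prompt itself.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptReview:
    """One reviewer's yes/no answers to the five scoring questions."""
    goal_specific: bool
    context_sufficient: bool
    constraints_explicit: bool
    output_format_defined: bool
    validation_included: bool

    def score(self) -> int:
        # Count how many of the five questions were answered "yes".
        return sum(getattr(self, f.name) for f in fields(self))

    def gaps(self) -> list[str]:
        # Names of the questions that were answered "no".
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Illustrative review of the "Add a search input..." prompt from this article.
# The answers are one reviewer's judgment: the prompt names no stack,
# so context is marked as insufficient.
review = PromptReview(
    goal_specific=True,
    context_sufficient=False,
    constraints_explicit=True,
    output_format_defined=True,
    validation_included=True,
)

print(review.score(), review.gaps())  # 4 ['context_sufficient']
```

The list returned by `gaps()` tells the author what to add before sending the prompt.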

Extra example: database query optimization

Before

Make these queries faster.

After (PromptDC rewritten)

Optimize the dashboard queries by adding indexes, reducing N+1 calls, and caching computed metrics. Include the updated query plan and a short benchmark summary.

FAQ

Do I need different prompts for each model?

Not if the prompt is structured. Model-agnostic prompts work across ChatGPT, Claude, Gemini, and IDE agents.

Are best coding prompts always long?

No. They are clear. A short structured prompt beats a long vague one.

How does PromptDC help?

It rewrites your prompt into a developer-grade spec so the model can execute without guessing.

What makes a prompt the “best”

- Clear goal and success criteria.

- Context: stack, files, and constraints.

- Output format so the model returns usable code.

- Edge cases and validation rules.

Prompt template

Goal: [what to build]

Context: [stack, existing files, constraints]

Requirements: [must-haves + must-not-haves]

Output format: [file list, components, steps]

Edge cases: [validation, errors, limits]
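The template can also be kept as a small data structure so every prompt is rendered in the same order. This is a minimal sketch under stated assumptions: the class name `PromptSpec` is hypothetical, and the stack and file names in the usage are invented for illustration around the search example from this article.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """The five sections of the prompt template."""
    goal: str
    context: str
    requirements: str
    output_format: str
    edge_cases: str

    def render(self) -> str:
        sections = [
            ("Goal", self.goal),
            ("Context", self.context),
            ("Requirements", self.requirements),
            ("Output format", self.output_format),
            ("Edge cases", self.edge_cases),
        ]
        # Refuse to render a prompt with an empty section.
        empty = [label for label, text in sections if not text.strip()]
        if empty:
            raise ValueError(f"empty sections: {', '.join(empty)}")
        return "\n\n".join(f"{label}: {text.strip()}" for label, text in sections)


# Hypothetical stack and file names, for illustration only.
spec = PromptSpec(
    goal="Add a search input that filters the customer table by name, email, and company.",
    context="React + TypeScript app; the table lives in CustomerTable.tsx.",
    requirements="Debounce input; highlight matched text; do not change the table API.",
    output_format="Updated component file and a test file, each in its own code block.",
    edge_cases="Empty query, no matches (show empty state), special characters in input.",
)

rendered = spec.render()
print(rendered)
```

Raising on an empty section keeps the template honest: a missing Context or Edge cases line is caught before the prompt reaches the model.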

Example prompts

Example

Build a responsive dashboard with KPI cards, a filters row, and a table. Use semantic headings and return the file structure.

Example

Create a checkout flow with validation, loading states, and clear error handling. Provide the component list and usage example.

Quality checklist

| Item | What good looks like |
|---|---|
| Clarity | Single objective with measurable outcome |
| Context | Stack, files, and dependencies included |
| Constraints | Performance, accessibility, and style rules |
| Output format | File list and component breakdown |
| Edge cases | Validation and error handling specified |

Prompt rewrite examples

Structured prompts reduce back-and-forth with AI coding. Use the examples below to see how a vague request becomes an implementation-ready spec.

Before

Give me some coding prompts.

After (PromptDC rewritten)

Generate 10 AI coding prompts with clear goals, context, constraints, and output format. Include one example per prompt to show the expected response.

Before

I need prompts for UI work.

After (PromptDC rewritten)

Create a set of AI coding prompts for UI tasks: layout, components, and accessibility. Each prompt must include success criteria and file structure requirements.

Fast rewrite workflow

- State the goal and success criteria.

- Add context: stack, files, and constraints.

- Specify output format and component boundaries.

- Call out edge cases and validation rules.

- Request a short implementation plan.

Who this is for

- Teams using AI coding who need consistent outputs.

- Developers who want fewer revisions and cleaner diffs.

- Founders shipping fast without sacrificing quality.

Use cases

- Landing pages, dashboards, and UI components.

- Refactors, migrations, and code cleanup.

- Bug fixes with clear reproduction steps.

- Reusable prompt templates for teams.

Prompt review checklist

| Check | What to verify |
|---|---|
| Goal | One clear objective with success criteria |
| Context | Stack, files, and dependencies listed |
| Constraints | Design, performance, and accessibility rules |
| Output format | File list and component breakdown |
| Edge cases | Empty states, errors, and validation |

Why this works

Prompt quality is one of the biggest multipliers for AI coding. Clear goals, constraints, and output format keep the model focused and reduce rework. PromptDC rewrites your inputs into a repeatable structure so the same task produces more consistent results across different projects and team members.

If you treat prompts like specs, you get predictable code. That means fewer retries, faster reviews, and a smoother handoff between designers, developers, and AI tools.
