Framework Guide

Prompt Engineering.

Structure beats length. Clarity beats cleverness. This is the systematic framework for turning AI into a reliable pair programmer for production environments.

Defining the Goal: Precision vs. Ambiguity

The foundation of AI prompt engineering for code is a clear primary objective. Instead of asking for a 'feature,' define the functional transformation required. A well-engineered prompt states the technical goal unambiguously: 'Implement a debounced search input that queries an internal API and updates a global context store.' This level of specificity points the LLM toward the right architectural patterns from the start.
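One way to keep goal statements this specific is to build them from explicit parts rather than free text. The sketch below is only an illustration: `GoalParts` and `renderGoal` are hypothetical names, not part of any tool mentioned here, and the sentence template is an assumption.

```typescript
// Hypothetical shape for a goal statement; the field names are illustrative.
interface GoalParts {
  verb: string;        // what to do, e.g. "Implement"
  artifact: string;    // what to build
  behaviors: string[]; // what the artifact must do, one clause each
}

// Joins the parts into one sentence: "<verb> <artifact> that <b1> and <b2>."
function renderGoal(parts: GoalParts): string {
  const behaviors = parts.behaviors.join(" and ");
  return `${parts.verb} ${parts.artifact} that ${behaviors}.`;
}

const searchGoal: GoalParts = {
  verb: "Implement",
  artifact: "a debounced search input",
  behaviors: ["queries an internal API", "updates a global context store"],
};

const goalSentence = renderGoal(searchGoal);
console.log(goalSentence);
```

Filling in `behaviors` forces the question a vague request skips: what, concretely, should the code do?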

Injecting Environmental Context

An AI model without context is like a junior developer without a codebase walk-through. Your prompts must include the technical environment: framework versions, styling libraries (Tailwind, SCSS), state management (Redux, Zustand), and authentication protocols. Supplying this context closes the gap between the model's generic defaults and your specific repository structure.
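As a rough sketch, the environment can be captured once in a typed object and rendered into every prompt. `ProjectContext` and `renderContext` are hypothetical names, and every value in `exampleContext` is a placeholder to be replaced with your project's real stack.

```typescript
// Hypothetical description of a project's environment.
interface ProjectContext {
  framework: string;
  styling: string;
  stateManagement: string;
  auth: string;
}

// Renders the context as a labeled block that can be pasted into a prompt.
function renderContext(ctx: ProjectContext): string {
  const lines = [
    `- Framework: ${ctx.framework}`,
    `- Styling: ${ctx.styling}`,
    `- State management: ${ctx.stateManagement}`,
    `- Authentication: ${ctx.auth}`,
  ];
  return ["Context:"].concat(lines).join("\n");
}

// Placeholder values for illustration only.
const exampleContext: ProjectContext = {
  framework: "React 18 with TypeScript",
  styling: "Tailwind",
  stateManagement: "Zustand",
  auth: "OAuth 2.0 bearer tokens",
};

const contextBlock = renderContext(exampleContext);
console.log(contextBlock);
```

Because every field of the interface is required, the compiler flags a context object that leaves part of the environment out.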

Enforcing Architectural Constraints

Production code is defined by its restrictions. Effective prompt engineering involves listing what the AI *cannot* do. Specify things like: 'No external libraries except those mentioned,' 'Ensure O(n) time complexity,' or 'Maintain 100% type safety.' By defining the boundaries, you steer the LLM away from quick-and-dirty solutions that increase technical debt.
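Some constraints can also be checked mechanically once the model responds. The sketch below takes the 'no external libraries except those mentioned' rule and scans generated code for imports outside an allowlist. `findDisallowedImports` is a hypothetical helper, and its regular expression is a simplification: it only sees `import ... from "..."` statements, not `require` calls or side-effect imports.

```typescript
// Returns the package names imported by `code` that are not in `allowed`.
// Assumption: imports use the `import ... from "pkg"` form.
function findDisallowedImports(code: string, allowed: string[]): string[] {
  const importPattern = /from\s+["']([^"']+)["']/g;
  const disallowed: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(code)) !== null) {
    const specifier = match[1];
    // Relative and absolute paths are local files, not external libraries.
    const first = specifier.charAt(0);
    if (first === "." || first === "/") continue;
    // "lodash/debounce" -> "lodash"; "@scope/pkg/sub" -> "@scope/pkg".
    const segments = specifier.split("/");
    const pkg = first === "@" ? segments.slice(0, 2).join("/") : segments[0];
    if (allowed.indexOf(pkg) === -1 && disallowed.indexOf(pkg) === -1) {
      disallowed.push(pkg);
    }
  }
  return disallowed;
}

// Example model output, written here by hand for illustration.
const generated = [
  'import { useState } from "react";',
  'import debounce from "lodash/debounce";',
  'import { useStore } from "./store";',
].join("\n");

const violations = findDisallowedImports(generated, ["react", "zustand"]);
console.log(violations); // ["lodash"]
```

A check like this turns a stated boundary into something you can verify instead of something you hope the model respected.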

The Definition of Done: Success Criteria

How does the AI know it succeeded? A masterfully engineered prompt includes acceptance criteria. 'The solution must pass Jest unit tests,' 'Must achieve a Lighthouse score above 90,' or 'Include empty and error states.' Explicitly stating the success criteria gives the model a concrete target to check its output against before returning the final code block.
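Acceptance criteria are easier to enforce when each one names how it will be verified. The following is a minimal sketch under that assumption: the `AcceptanceCriterion` type, the `verifiedBy` categories, and the checklist format are all illustrative choices rather than an established convention.

```typescript
type VerificationMethod = "automated test" | "audit tool" | "manual review";

// Hypothetical shape: one criterion plus how it will be checked.
interface AcceptanceCriterion {
  description: string;
  verifiedBy: VerificationMethod;
}

// Renders the criteria as an unchecked checklist for the end of a prompt.
function renderCriteria(criteria: AcceptanceCriterion[]): string {
  const items = criteria.map(
    (c) => `- [ ] ${c.description} (verified by: ${c.verifiedBy})`
  );
  return ["Done when:"].concat(items).join("\n");
}

const searchCriteria: AcceptanceCriterion[] = [
  { description: "Passes the Jest unit tests", verifiedBy: "automated test" },
  { description: "Lighthouse score above 90", verifiedBy: "audit tool" },
  { description: "Empty and error states are rendered", verifiedBy: "manual review" },
];

const criteriaBlock = renderCriteria(searchCriteria);
console.log(criteriaBlock);
```

The same list doubles as your own review checklist when the response comes back.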

Why Generic Prompting is a Productivity Trap.

The 'quick prompt' loop is deceptively expensive. A developer might spend 10 seconds typing a vague request, wait 30 seconds for a response, and then spend 20 minutes fixing the hallucinations, incorrect imports, and broken logic. This is not productivity; it is a high-speed treadmill of rework.

True prompt engineering for AI coding is about spending an extra minute up front to save hours of rework later. When instructions are structured, AI doesn't just 'guess' at code; it executes a spec. This shift from guessing to executing is what defines a professional LLM workflow.
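The difference between a quick prompt and a spec can be made visible with a small lint. This sketch assumes the four parts of the framework (goal, context, constraints, success criteria) are labeled in the prompt as `Goal:`, `Context:`, `Constraints:`, and `Done when:`. Those labels and the `missingSections` helper are hypothetical conventions chosen for the example.

```typescript
// Assumed section labels, one per part of the framework.
const REQUIRED_SECTIONS = ["Goal:", "Context:", "Constraints:", "Done when:"];

// Returns the labels that no line of the prompt starts with.
function missingSections(prompt: string): string[] {
  const lines = prompt.split("\n").map((line) => line.trim());
  return REQUIRED_SECTIONS.filter(
    (label) => !lines.some((line) => line.indexOf(label) === 0)
  );
}

const quickPrompt = "Add a search feature";

const structuredPrompt = [
  "Goal: Implement a debounced search input that queries an internal API.",
  "Context:",
  "- State management: Zustand",
  "Constraints:",
  "1. No external libraries except those mentioned",
  "Done when:",
  "- [ ] Empty and error states are included",
].join("\n");

console.log(missingSections(quickPrompt));      // all four labels
console.log(missingSections(structuredPrompt)); // []
```

Running a check like this before sending a prompt costs seconds and catches the omissions that tend to cause the rework described above.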

The Engineering ROI

Framework FAQs.

What is AI coding prompt engineering?

It is the systematic practice of structuring instructions for AI models and coding tools (like GPT or Claude Code) to ensure they produce reliable, production-ready code rather than generic snippets.

Does prompt engineering still matter with better models?

Yes. In fact, better models reward better prompting. High-reasoning models, such as those behind Claude Code, can follow much more complex architectural instructions, making precise prompt engineering even more valuable.

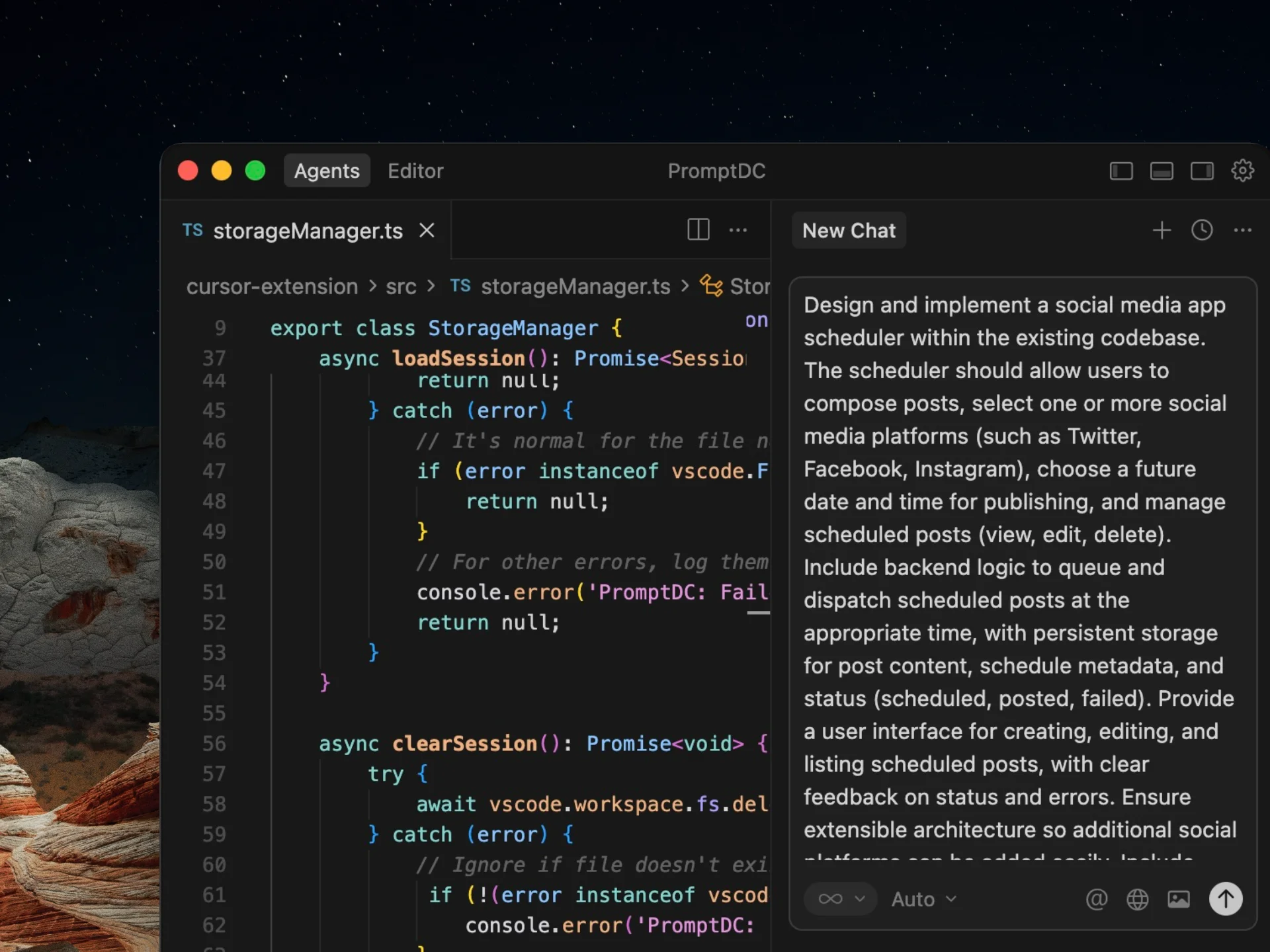

How does PromptDC simplify this process?

PromptDC automates the heavy lifting of prompt engineering by taking a simple idea and re-structuring it into a multi-part technical specification that follows best practices. PromptDC is platform-aware, not a one-size-fits-all prompt enhancer: on supported web platforms it detects the current AI product and applies the right rewrite profile, and in IDE extensions you can select the target model/IDE so the rewrite uses the correct system-prompt assumptions.

Enhance your coding prompts.

Right where you code.

For clearer instructions, faster output, and better coding results.