Application Framework

LLM Workflows, Redefined.

Effective prompt engineering for AI coding isn't about magic keywords; it's about technical precision. Explore the core use cases where high-fidelity prompt rewriting takes LLM output from prototype to production.

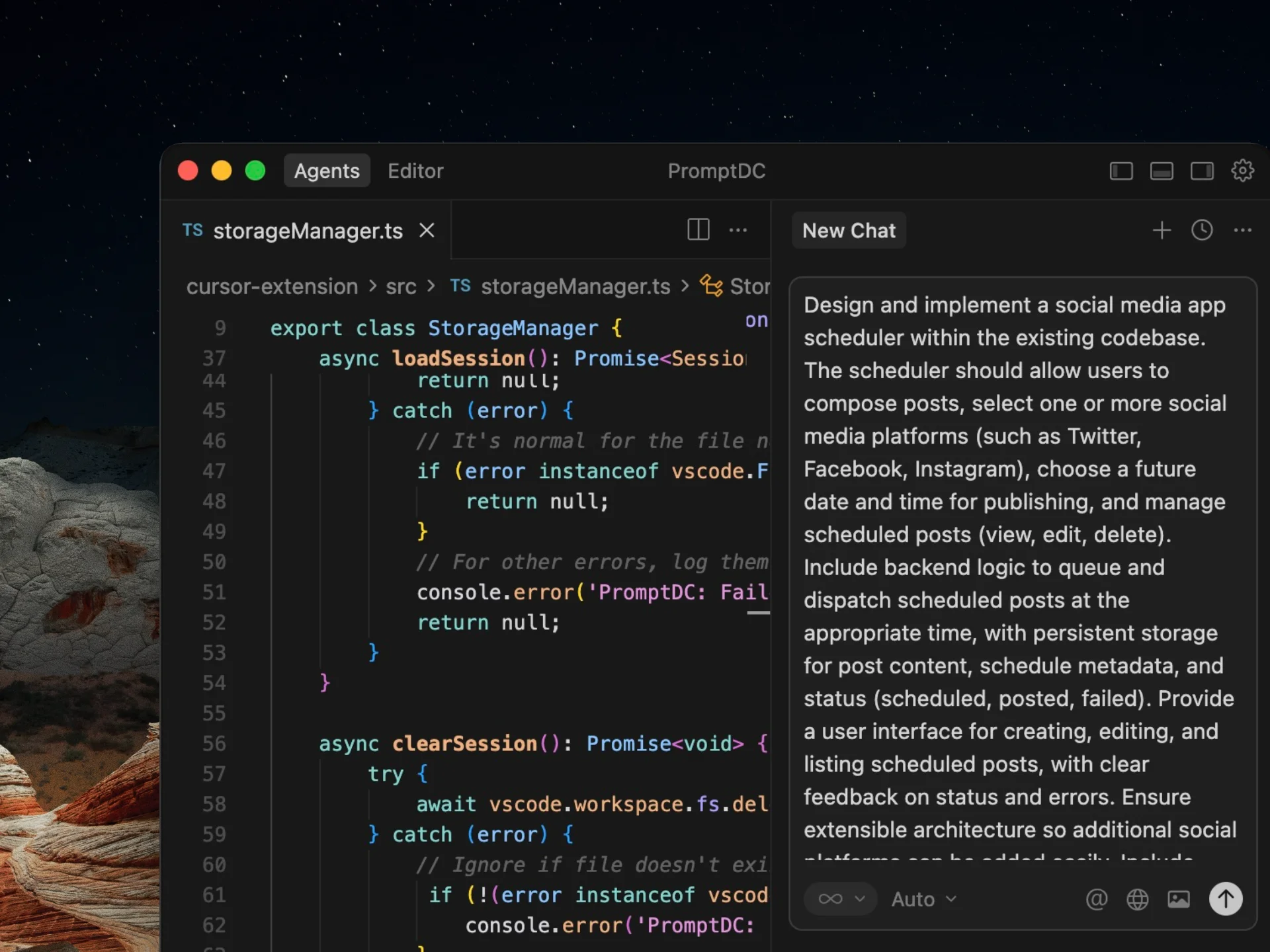

Rewriting Complex Feature Specs for LLM Readiness

When building complex features, a simple prompt often leads to hallucinations or shallow architecture. PromptDC rewrites these specifications to include explicit architectural boundaries, state management requirements, and performance constraints. By turning a vague feature request into a high-fidelity technical spec, you ensure that Claude Code or GPT produces code that actually integrates with your existing codebase and respects your specific patterns and libraries.
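PromptDC's actual rewrite format is not described on this page, so the following is only a minimal sketch of the idea, assuming a spec is assembled from four constraint sections. The names `FeatureSpec` and `render_spec_prompt` and the section titles are hypothetical. The point it illustrates: every constraint category is always present in the rendered prompt, and an empty one is flagged rather than silently dropped, so the model is told not to assume.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureSpec:
    """Hypothetical container for the constraints a rewritten spec should state."""
    request: str
    boundaries: list[str] = field(default_factory=list)   # modules the change may touch
    state: list[str] = field(default_factory=list)        # state management requirements
    performance: list[str] = field(default_factory=list)  # performance constraints
    conventions: list[str] = field(default_factory=list)  # existing patterns and libraries


def render_spec_prompt(spec: FeatureSpec) -> str:
    """Render the spec as a sectioned prompt; empty sections are flagged, not dropped."""
    sections = [
        ("Architectural boundaries", spec.boundaries),
        ("State management", spec.state),
        ("Performance constraints", spec.performance),
        ("Existing patterns and libraries", spec.conventions),
    ]
    lines = [f"Feature request: {spec.request}", ""]
    for title, items in sections:
        lines.append(f"## {title}")
        if items:
            lines.extend(f"- {item}" for item in items)
        else:
            # Tell the model explicitly not to invent a constraint we never gave it.
            lines.append("- (unspecified: ask before assuming)")
        lines.append("")
    return "\n".join(lines).rstrip()


if __name__ == "__main__":
    # Illustrative values only; paths and budgets are made up.
    spec = FeatureSpec(
        request="Add saved searches to the dashboard",
        boundaries=["Only modify src/search/ and src/dashboard/"],
        performance=["Results render in under 200 ms for 10k rows"],
    )
    print(render_spec_prompt(spec))
```

Keeping the unspecified sections visible is a deliberate choice: a missing constraint becomes a question for the model to raise instead of a gap it fills with its own assumptions.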

High-Precision UI/UX Component Generation

UI development requires more than just CSS. It also requires adherence to accessibility standards, responsive breakpoints, and well-defined interaction states. PromptDC injects these requirements into your prompts. Instead of 'make a button', the rewritten prompt specifies ARIA labels, focus states, hover transitions, and mobile-first responsiveness. This use case is critical for teams using AI coding tools to build production-grade interfaces that meet modern web standards without manual post-processing.

Context-Aware Debugging and Error Resolution

Many developers struggle to provide enough context when debugging. PromptDC's rewriting engine identifies what is missing from your bug reports, such as environment details, reproduction steps, and expected vs. actual behavior. It then structures this information into a logical troubleshooting guide for the AI. This significantly shortens the retry loop, allowing the LLM to identify a bug's root cause on the first pass rather than guessing from an incomplete stack trace.
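As a rough illustration of the "identify missing pieces, then structure" step, here is a minimal sketch, assuming a bug report is usable only once it has an environment, reproduction steps, and expected and actual behavior. `BugReport`, `missing_context`, and `render_debug_prompt` are hypothetical names, not PromptDC's engine; they only show the shape of the check.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical minimum context a debugging prompt should carry.
REQUIRED_FIELDS = ("environment", "steps_to_reproduce", "expected", "actual")


@dataclass
class BugReport:
    summary: str
    environment: Optional[str] = None
    steps_to_reproduce: Optional[list[str]] = None
    expected: Optional[str] = None
    actual: Optional[str] = None
    stack_trace: Optional[str] = None  # useful, but not enough on its own


def missing_context(report: BugReport) -> list[str]:
    """Return the required fields that are absent or empty, in a stable order."""
    return [name for name in REQUIRED_FIELDS if not getattr(report, name)]


def render_debug_prompt(report: BugReport) -> str:
    """Build a structured troubleshooting prompt, refusing if context is missing."""
    missing = missing_context(report)
    if missing:
        raise ValueError(f"Bug report is missing: {', '.join(missing)}")
    steps = "\n".join(f"{i}. {s}" for i, s in enumerate(report.steps_to_reproduce, 1))
    parts = [
        f"Bug: {report.summary}",
        f"Environment: {report.environment}",
        f"Steps to reproduce:\n{steps}",
        f"Expected behavior: {report.expected}",
        f"Actual behavior: {report.actual}",
    ]
    if report.stack_trace:
        parts.append(f"Stack trace:\n{report.stack_trace}")
    parts.append("Identify the most likely root cause before proposing a fix.")
    return "\n\n".join(parts)
```

For example, a report containing only a summary and an environment yields `["steps_to_reproduce", "expected", "actual"]` from `missing_context`, which is exactly the list a developer would be asked to fill in before the prompt is sent.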

Systematic Refactoring of Legacy Codebases

Refactoring legacy code with AI is dangerous without strict constraints. PromptDC allows you to specify what must remain stable and what the target architecture should be. It rewrites your refactoring prompts to enforce SOLID principles, DRY code, and type safety. Whether you're migrating from JavaScript to TypeScript or optimizing a slow backend endpoint, the enhanced prompt ensures the LLM doesn't introduce side effects or break existing business logic.

Automated Test Suite Generation and Coverage

Generating tests usually results in happy-path-only coverage. PromptDC enhances your testing prompts to include edge cases, error boundaries, and integration points. It specifies the testing framework (Jest, Playwright, Vitest) and the desired coverage metrics. This ensures that the generated tests actually help prevent regressions, rather than just inflating a line-coverage percentage with trivial assertions.

Why LLM Prompt Engineering Matters for Modern Teams

As Claude Code and GPT become more capable, the bottleneck in developer productivity is no longer generation speed; it's instruction quality. Most developers provide 'thin' prompts that lack essential technical context, forcing the LLM to make assumptions. These assumptions lead to bugs, architectural mismatches, and security vulnerabilities.

PromptDC solves this by acting as technical middleware. It interprets your intent and injects the necessary constraints, best practices, and structural frameworks into every request. This systematic approach makes your AI interactions reliable, repeatable, and scalable across your entire engineering organization.

Standardization

Ensure every developer on your team follows the same high standards for AI instructions.

Latency Reduction

Get the right output on the first pass. No more wasting time on regenerations and manual fixes.

Security First

Automatically inject security best practices into prompts, reducing the chance that the AI suggests vulnerable code.

Common Questions.

How does PromptDC improve LLM code generation for specific use cases?

By injecting the technical constraints and structured requirements that generic prompts miss. PromptDC also uses platform- and model-aware rewrite profiles, so each supported web tool and IDE target gets its own rewrite behavior instead of a single generic prompt-enhancement path.

Can I use PromptDC for backend API design use cases?

Absolutely. PromptDC is highly effective for REST and GraphQL API design, ensuring that prompts include security requirements, input validation, and proper HTTP status code mapping.

Does it support mobile development use cases like React Native?

Yes. PromptDC supports mobile and cross-platform development workflows (React Native, Swift, Kotlin, and more) while still applying platform-aware rewrite behavior for the AI tool you are targeting.

Enhance your coding prompts.

Right where you code.

For clearer instructions, faster output, and better coding results.