Case Engineering

Architectural Proof.

Proof of impact, not just hype. Explore how leading engineering teams leverage PromptDC to turn AI code generation into a reliable, enterprise-grade asset.

Scaling a Fintech Dashboard with Precise LLM Instructions

The Challenge

The development team was spending 30% of its sprint time correcting AI-generated code for its analytics dashboard. The prompts were often generic, which led to inconsistent state management patterns and poor accessibility compliance across the React-based frontend.

The PromptDC Solution

By integrating PromptDC's rewriting engine, the team standardized their UI prompts. Every request for a new table or chart was automatically enhanced with strict TypeScript interfaces, ARIA-compliant component structures, and error boundary requirements. The prompts now specified exactly how the model should handle asynchronous data fetching and global state transitions.
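To make the idea concrete, here is a minimal sketch of what this kind of prompt enhancement could look like. It is not PromptDC's actual engine or API: the names `UiPromptRequest`, `enhanceUiPrompt`, and the wording of each requirement are assumptions made purely for illustration.

```typescript
// Hypothetical sketch: none of these names or strings come from PromptDC itself.
type ComponentKind = "table" | "chart";

interface UiPromptRequest {
  kind: ComponentKind;
  description: string; // the developer's original, generic prompt
}

// Requirement themes follow the case study; the exact wording is illustrative.
const BASE_REQUIREMENTS: string[] = [
  "Define strict TypeScript interfaces for all props and fetched data.",
  "Use ARIA-compliant markup and roles.",
  "Wrap the component in an error boundary.",
  "Handle loading, success, and error states for asynchronous data fetching.",
  "Describe every global state transition the component triggers.",
];

// Extra requirements per component kind (assumed examples).
const KIND_REQUIREMENTS: Record<ComponentKind, string[]> = {
  table: ["Mark column headers with scope attributes."],
  chart: ["Provide a text alternative summarizing the chart data."],
};

// Appends a numbered requirements list to the original prompt.
function enhanceUiPrompt(request: UiPromptRequest): string {
  const requirements = [...BASE_REQUIREMENTS, ...KIND_REQUIREMENTS[request.kind]];
  const numbered = requirements.map((r, i) => `${i + 1}. ${r}`).join("\n");
  return `${request.description.trim()}\n\nRequirements:\n${numbered}`;
}
```

Calling `enhanceUiPrompt({ kind: "table", description: "Build a transactions table." })` returns the original sentence followed by a six-item requirements list, so every table request carries the same baseline expectations.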

The Outcome

Manual code correction decreased by 65%. The team successfully launched a mission-critical dashboard feature in half the originally estimated time, with 100% adherence to their internal design system and security protocols.

Optimizing Legacy Backend Migration using Claude Code

The Challenge

A legacy monolith migration to microservices was stalling due to the complexity of legacy database schemas. Basic AI prompts were failing to capture high-stakes business logic, resulting in suggested microservices that lacked proper validation and transactional integrity.

The PromptDC Solution

The team used PromptDC to rewrite their migration prompts. The enhanced instructions included explicit database constraint mapping, service-to-service communication patterns, and unit testing requirements for every new endpoint. These instructions forced the LLM to consider data-consistency edge cases that had previously been ignored.
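As a rough illustration of explicit constraint mapping, the sketch below turns a table's constraints into plain-language instructions for the model. The `Constraint` and `TableSchema` types, the function names, and the example schema are all hypothetical; the case study does not describe the real format.

```typescript
// Hypothetical types; any schema passed in is example data, not the team's real schema.
type Constraint =
  | { kind: "notNull"; column: string }
  | { kind: "unique"; column: string }
  | { kind: "foreignKey"; column: string; references: string }
  | { kind: "check"; expression: string };

interface TableSchema {
  name: string;
  constraints: Constraint[];
}

// Translates one database constraint into an instruction the model must follow.
function describeConstraint(table: string, c: Constraint): string {
  switch (c.kind) {
    case "notNull":
      return `Reject requests where ${table}.${c.column} is missing.`;
    case "unique":
      return `Enforce uniqueness of ${table}.${c.column} and return a conflict error on duplicates.`;
    case "foreignKey":
      return `Verify that ${table}.${c.column} refers to an existing ${c.references} before writing.`;
    case "check":
      return `Validate the rule (${c.expression}) on ${table} in the service layer.`;
  }
}

// Builds the migration prompt: task, mapped constraints, then the testing requirement.
function buildMigrationPrompt(task: string, schema: TableSchema): string {
  const rules = schema.constraints.map((c) => `- ${describeConstraint(schema.name, c)}`);
  return [
    task,
    "",
    `Constraints carried over from table ${schema.name}:`,
    ...rules,
    "",
    "Write unit tests for every new endpoint, including one test per constraint above.",
  ].join("\n");
}
```

Listing each constraint as its own line gives reviewers a checklist to compare against the generated service, which is one plausible way to keep validation rules from being dropped during migration.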

The Outcome

The migration of the core payment service was completed 3 weeks ahead of schedule. The generated code had 0 critical bugs in the first production load test, a significant improvement over previous manual-plus-generic-AI attempts.

Enforcing Design System Standards across 20+ Frontend Applications

The Challenge

Maintaining visual and functional consistency across disparate product teams was a nightmare. AI-generated UI components varied wildly in style and implementation, breaking the unified user experience.

The PromptDC Solution

PromptDC acted as the technical layer that injected design system tokens and component guidelines into every UI-related prompt. Whether a developer was building a login form or a complex settings modal, the rewritten prompt ensured the LLM used the correct Tailwind tokens, spacing scales, and reusable component libraries.
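A simplified sketch of token injection might look like the following. The `DesignTokens` shape, the keyword check in `isUiPrompt`, and the function names are assumptions for illustration only; a real integration would read tokens from the team's own design system.

```typescript
// Hypothetical token map; real values would come from the team's design system.
interface DesignTokens {
  colors: Record<string, string>; // semantic name -> Tailwind class
  spacing: string[];              // allowed spacing utilities
  components: string[];           // reusable components to prefer
}

// Naive keyword check for UI-related prompts (an assumed heuristic).
function isUiPrompt(prompt: string): boolean {
  return /\b(form|modal|button|page|component|table|chart)\b/i.test(prompt);
}

// Appends design system rules to UI prompts and leaves other prompts untouched.
function injectDesignSystem(prompt: string, tokens: DesignTokens): string {
  if (!isUiPrompt(prompt)) return prompt;
  const colorLines = Object.entries(tokens.colors).map(([name, cls]) => `- ${name}: ${cls}`);
  return [
    prompt,
    "",
    "Design system rules:",
    "Use only these color classes:",
    ...colorLines,
    `Use only these spacing utilities: ${tokens.spacing.join(", ")}`,
    `Reuse these components instead of writing new ones: ${tokens.components.join(", ")}`,
  ].join("\n");
}
```

With this shape, a prompt such as "Build a login form" gains the token rules, while a non-UI prompt such as "Rename this variable" passes through unchanged.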

The Outcome

The organization achieved 95% design-to-code consistency. This standardized approach eliminated 'UI drift' and cut design review hours by over 40%.

The ROI of High-Fidelity Prompting.

Implementing PromptDC isn't just about better code; it's about engineering culture. When a team adopts structured prompt rewriting, it is effectively standardizing its collective knowledge. Junior developers start producing code that looks like it was written by seniors because the AI is being told exactly which patterns to follow.

Our case studies consistently demonstrate that the cost of 'free' generic prompting is hidden in the hours spent debugging, refactoring, and arguing with LLM hallucinations. By investing in the instruction layer, organizations unlock the true potential of their AI investments, moving from experimental usage to core implementation.

Evidence & Insights.

How do these LLM case studies translate to individual developer gains?

Beyond team metrics, individual developers report a significant reduction in 'prompt fatigue'. By getting the right answer faster, they maintain flow state and focus on high-level architecture rather than debugging AI syntax errors.

Do you have case studies for specific AI coding agents like Cursor or Copilot?

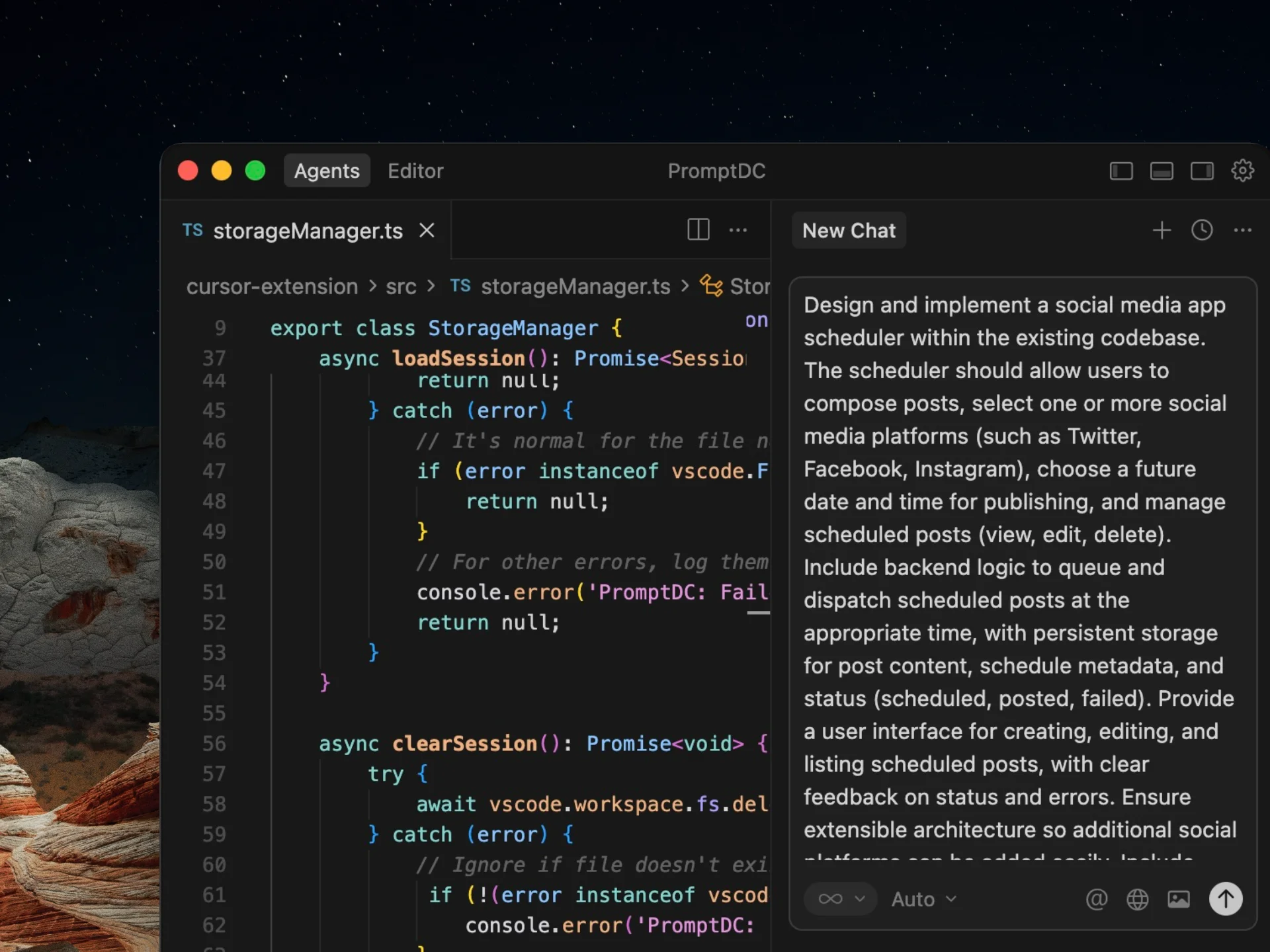

Yes. PromptDC works across major LLMs, but it does not use one generic rewrite for every tool. We use platform/model-aware rewrite profiles (with supported web platform detection and IDE model selection), and we have documented cases where this improved multi-file reasoning in IDE agents.
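One way to picture platform- and model-aware profiles is a lookup with fallbacks, sketched below. The `RewriteProfile` fields, the profile keys, the model name `example-model`, and the preamble text are all invented for illustration and do not describe PromptDC's real profiles.

```typescript
// Hypothetical profile shape; the keys and preambles below are made-up examples.
interface RewriteProfile {
  target: string;
  preamble: string; // text added ahead of the rewritten prompt
}

const DEFAULT_PROFILE: RewriteProfile = {
  target: "default",
  preamble: "Follow the requirements below exactly.",
};

const PROFILES = new Map<string, RewriteProfile>([
  ["cursor", { target: "cursor", preamble: "List every file you intend to change before editing." }],
  ["cursor:example-model", { target: "cursor:example-model", preamble: "Plan the multi-file change first, then edit one file at a time." }],
]);

// Picks the most specific profile: platform plus model, then platform, then default.
function selectProfile(platform?: string, model?: string): RewriteProfile {
  const p = platform?.toLowerCase();
  const m = model?.toLowerCase();
  const exact = p && m ? PROFILES.get(`${p}:${m}`) : undefined;
  const byPlatform = p ? PROFILES.get(p) : undefined;
  return exact ?? byPlatform ?? DEFAULT_PROFILE;
}

// Prepends the selected profile's preamble to the prompt.
function applyProfile(prompt: string, platform?: string, model?: string): string {
  return `${selectProfile(platform, model).preamble}\n\n${prompt}`;
}
```

The fallback order means an unrecognized tool still receives a sensible generic rewrite, while a known platform and model pairing gets the most specific instructions available.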

Enhance your coding prompts.

Right where you code.

For clearer instructions, faster output, and better coding results.