Exploring Cursor's AI coding assistant features reveals a context-aware tool built to change how you write code. From inline chat to project-wide codebase understanding, Cursor offers a range of capabilities aimed at boosting your productivity.

Key Cursor AI Coding Assistant Features

Standout Cursor AI coding assistant features include generating, refactoring, and debugging code. Because Cursor can index your codebase, its suggestions can draw on your project's files and conventions, not just the file you have open. Even so, the quality of the output depends heavily on the quality of your prompts.

Enhance Every Feature with PromptDC

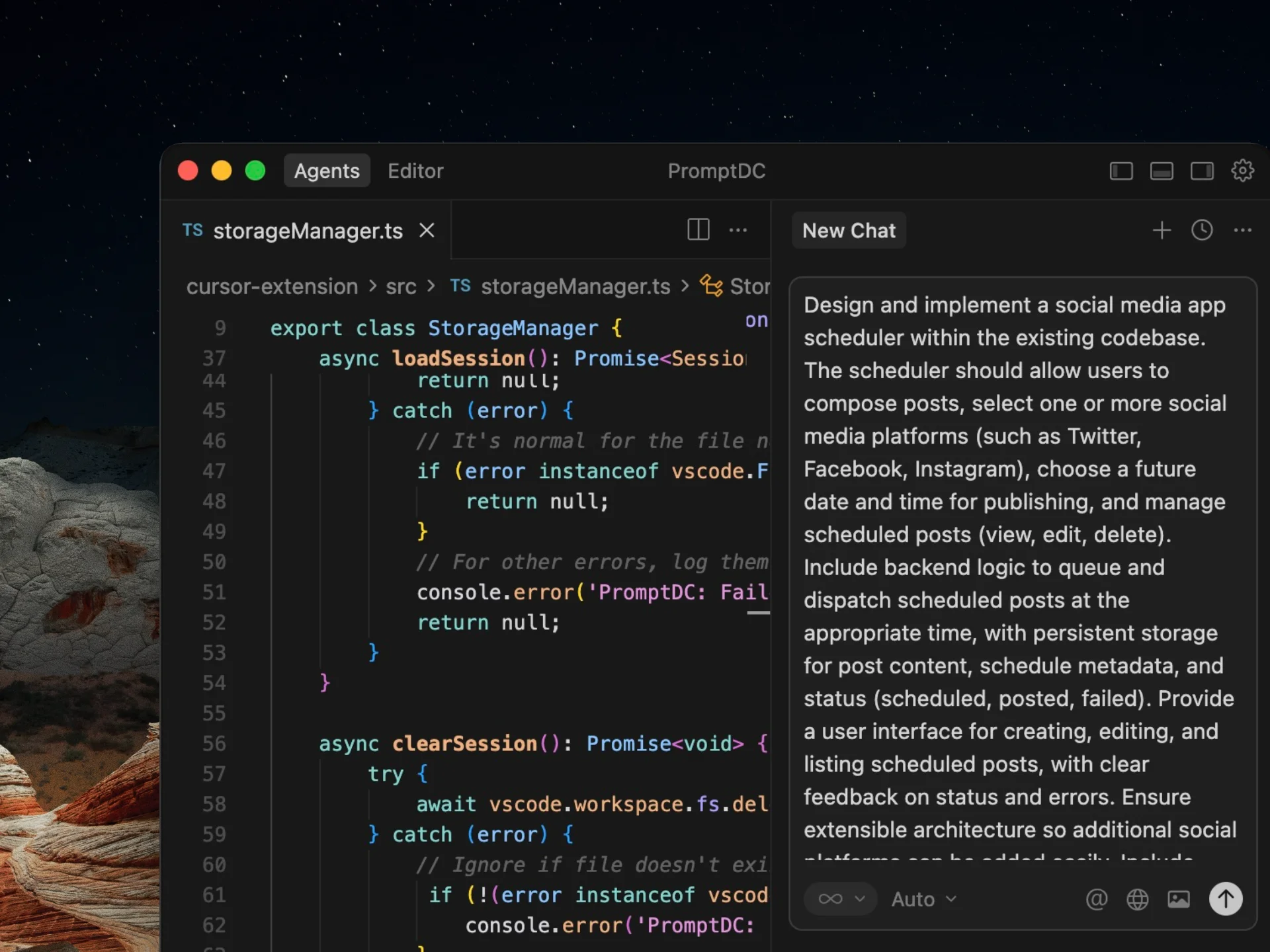

PromptDC is the missing piece for getting the most out of Cursor's AI coding assistant features. Our extension refines your prompts for clarity and context so you get better output from every interaction. Whether you're using chat, code generation, or 'fixit', PromptDC helps you communicate more effectively with Cursor's AI.

Feature categories

- Code generation and refactoring support.

- Context-aware suggestions and navigation.

- Prompt management and reusable workflows.

- Testing and debugging assistance.

Where it helps most

- Speeding up repetitive coding tasks.

- Reducing context switching with inline assistance.

- Improving consistency across components.

Prompt workflow tips

- Define goal, constraints, and output format.

- Provide file paths and component boundaries.

- Ask for step-by-step changes instead of full rewrites.

Evaluation checklist

| Item | What good looks like |
|---|---|
| Accuracy | Changes match requirements |
| Consistency | Follows project conventions |
| Speed | Reduces manual effort |
| Reliability | Repeatable results across prompts |

FAQ

Do I need long prompts for quality output?

No. Structured prompts are more important than length.

Does PromptDC replace my AI tool?

No. PromptDC improves prompts so the tool performs better.

Can I reuse templates across projects?

Yes. Reusable templates save time and improve consistency.

Prompt rewrite examples

Structured prompts reduce back-and-forth with Cursor. Use the examples below to see how a vague request becomes an implementation-ready spec.

Before

List Cursor features.

After (PromptDC rewritten)

List Cursor features grouped by category (editing, context, refactor, testing). Provide a short example for each feature group.

Before

What does it help with?

After (PromptDC rewritten)

Explain Cursor strengths for code generation, navigation, and refactoring. Include a prompt template for each workflow.

Fast rewrite workflow

- State the goal and success criteria.

- Add context: stack, files, and constraints.

- Specify output format and component boundaries.

- Call out edge cases and validation rules.

- Request a short implementation plan.
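The five steps above map naturally onto a reusable fill-in template. Here is a minimal sketch using Python's standard `string.Template`. The template text, the placeholder names (`goal`, `success`, `context`, `output`, `edge_cases`), and the `fill_template` helper are hypothetical illustrations, not PromptDC's actual format.

```python
from string import Template

# Hypothetical reusable template: one $placeholder per step of the
# fast rewrite workflow. The final line always asks for a plan first.
REWRITE_TEMPLATE = Template(
    "Goal: $goal\n"
    "Success criteria: $success\n"
    "Context: $context\n"
    "Output format: $output\n"
    "Edge cases and validation: $edge_cases\n"
    "Before writing code, reply with a short implementation plan."
)


def fill_template(**fields: str) -> str:
    """Fill every step of the template; raises KeyError if a step is missing."""
    # substitute() (unlike safe_substitute()) fails loudly on a missing
    # placeholder, so an incomplete prompt is never produced silently.
    return REWRITE_TEMPLATE.substitute(**fields)


if __name__ == "__main__":
    # Illustrative values only; swap in your own stack and files.
    print(fill_template(
        goal="Add a password reset form to the login page",
        success="Form submits, shows inline errors, existing tests pass",
        context="Next.js + TypeScript; edit src/app/login/page.tsx only",
        output="List changed files, then one diff per file",
        edge_cases="Empty email, unknown account, network failure",
    ))
```

Using `substitute()` rather than `safe_substitute()` is a deliberate choice here. A half-filled template raises an error instead of sending Cursor a prompt with a literal `$edge_cases` left in it.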

Who this is for

- Teams using Cursor who need consistent outputs.

- Developers who want fewer revisions and cleaner diffs.

- Founders shipping fast without sacrificing quality.

Use cases

- Landing pages, dashboards, and UI components.

- Refactors, migrations, and code cleanup.

- Bug fixes with clear reproduction steps.

- Reusable prompt templates for teams.

Prompt review checklist

| Check | What to verify |
|---|---|
| Goal | One clear objective with success criteria |
| Context | Stack, files, and dependencies listed |
| Constraints | Design, performance, and accessibility rules |
| Output format | File list and component breakdown |
| Edge cases | Empty states, errors, and validation |
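If you keep prompt drafts as structured fields, you can automate the review checklist. The sketch below assumes a draft is a plain dictionary. The field names in `CHECKS` and the `review_prompt` function are hypothetical and simply mirror the table rows. The function reports which checks are still missing or blank.

```python
# Hypothetical mapping from checklist row to the draft field that covers it.
CHECKS = {
    "Goal": "goal",
    "Context": "context",
    "Constraints": "constraints",
    "Output format": "output_format",
    "Edge cases": "edge_cases",
}


def review_prompt(spec: dict[str, str]) -> list[str]:
    """Return the checklist rows whose field is missing or blank, in table order."""
    return [
        check
        for check, field_name in CHECKS.items()
        if not spec.get(field_name, "").strip()
    ]


if __name__ == "__main__":
    draft = {
        "goal": "Add a dark-mode toggle to the settings page",
        "context": "React 18, src/settings/SettingsPage.tsx",
        "output_format": "",  # left blank on purpose
    }
    print(review_prompt(draft))  # ['Constraints', 'Output format', 'Edge cases']
```

An empty result means every row of the checklist has at least some content. Whether that content is *good* still needs a human read.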

Why this works

Prompt quality is one of the biggest multipliers for Cursor's output. Clear goals, constraints, and output format keep the model focused and reduce rework. PromptDC rewrites your inputs into a repeatable structure, so the same task produces more consistent results across projects and team members.

If you treat prompts like specs, you get more predictable code. That means fewer retries, faster reviews, and a smoother handoff between designers, developers, and AI tools.

Implementation-ready prompt format

Treat prompts like specs when working with Cursor. A good prompt should read like a mini PRD: it states the objective, the exact constraints, and the expected output. This forces the model to stay aligned with your real-world requirements instead of guessing. When you define the acceptance criteria up front, you also reduce back-and-forth and avoid brittle fixes.

A strong format includes scope, context, and output requirements. Scope tells the model what to include and what to ignore. Context anchors the request in your stack, file paths, and design system. Output requirements ensure the response is usable without heavy editing, such as listing file structure, component boundaries, and validation rules.

- Goal: one clear outcome with a success checklist.

- Context: stack, existing files, and any constraints.

- Requirements: must-haves and must-not-haves.

- Output: file list, component map, and steps.

- Quality gates: accessibility, performance, and tests.

PromptDC standardizes this format so teams can reuse high-performing prompts. The result is faster iterations, cleaner diffs, and more predictable output quality across projects.
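The five-part format above can be modeled as a small data structure that renders into a mini-PRD prompt. This is a sketch under stated assumptions. `PromptSpec`, its field names, and the rendered section labels are hypothetical and are not PromptDC's internal format. Empty sections are skipped so the prompt stays short.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """A mini-PRD prompt. Field names are illustrative, not a PromptDC format."""
    goal: str
    success_checklist: list[str]
    context: list[str]
    must_have: list[str] = field(default_factory=list)
    must_not_have: list[str] = field(default_factory=list)
    output: list[str] = field(default_factory=list)
    quality_gates: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render non-empty sections as 'Title:' followed by '- item' lines."""
        sections = [
            ("Goal", [self.goal]),
            ("Success checklist", self.success_checklist),
            ("Context", self.context),
            ("Must have", self.must_have),
            ("Must not have", self.must_not_have),
            ("Output", self.output),
            ("Quality gates", self.quality_gates),
        ]
        lines: list[str] = []
        for title, items in sections:
            if items:  # skip empty sections entirely
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


if __name__ == "__main__":
    # Illustrative values only.
    spec = PromptSpec(
        goal="Add pagination to the orders table",
        success_checklist=["Shows 25 rows per page", "Existing tests still pass"],
        context=["React + TypeScript", "src/components/OrdersTable.tsx"],
        must_not_have=["New dependencies"],
        output=["Changed files", "Short plan before code"],
        quality_gates=["Keyboard-accessible page controls"],
    )
    print(spec.render())
```

Keeping the spec as data rather than free text makes it easy to store, diff, and reuse across a team, which is the point of standardizing the format.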

Quality guardrails

Run these quick checks before you send a prompt. They help keep the output consistent and prevent expensive rewrites later.

- One goal per prompt.

- Explicit constraints and acceptance criteria.

- Clear output format and file structure.

- Edge cases listed up front.

- Ask for a short plan before code.

PromptDC makes these guardrails repeatable by turning rough ideas into structured specs you can reuse.
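Some of these guardrails can be checked mechanically before a prompt goes to Cursor. The sketch below is a crude heuristic linter. It assumes prompts use labeled sections at the start of a line (`Goal:`, `Constraints:`, `Acceptance criteria:`, `Output:`, `Edge cases:`). That labeling convention and the `lint_prompt` function are hypothetical, and the "plan" check is just a keyword search.

```python
import re

# Assumed convention: each section starts a line with "Label:".
REQUIRED_LABELS = ("Constraints", "Acceptance criteria", "Output", "Edge cases")
FLAGS = re.IGNORECASE | re.MULTILINE


def lint_prompt(text: str) -> list[str]:
    """Return guardrail warnings for a prompt written with labeled sections."""
    warnings = []
    # Guardrail: one goal per prompt.
    goals = re.findall(r"^goal:", text, flags=FLAGS)
    if len(goals) != 1:
        warnings.append(f"Expected exactly one Goal: line, found {len(goals)}")
    # Guardrails: explicit constraints, acceptance criteria, output, edge cases.
    for label in REQUIRED_LABELS:
        if not re.search(rf"^{re.escape(label)}:", text, flags=FLAGS):
            warnings.append(f"Missing section: {label}")
    # Guardrail: ask for a short plan before code (keyword heuristic only).
    if "plan" not in text.lower():
        warnings.append("No request for a short plan before code")
    return warnings


if __name__ == "__main__":
    draft = "Goal: Fix the login redirect\nGoal: Also restyle the header"
    for warning in lint_prompt(draft):
        print(warning)
```

A linter like this only catches missing structure. It cannot tell whether the constraints are the right ones, so treat it as a pre-flight check, not a quality score.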


Next step

Explore the integration.